Facebook introduces a new deepfake tracking method that aims to identify the generative AI model behind the fake.

As the operator of the world’s largest social media platforms, Facebook has a vested interest in recognizing deepfakes and thus controlling their spread on Instagram and its other services. A significant deepfake glut has yet to occur, but the number of deepfakes on the Internet had increased exponentially by the end of 2019, and the technology is constantly getting better and more accessible.

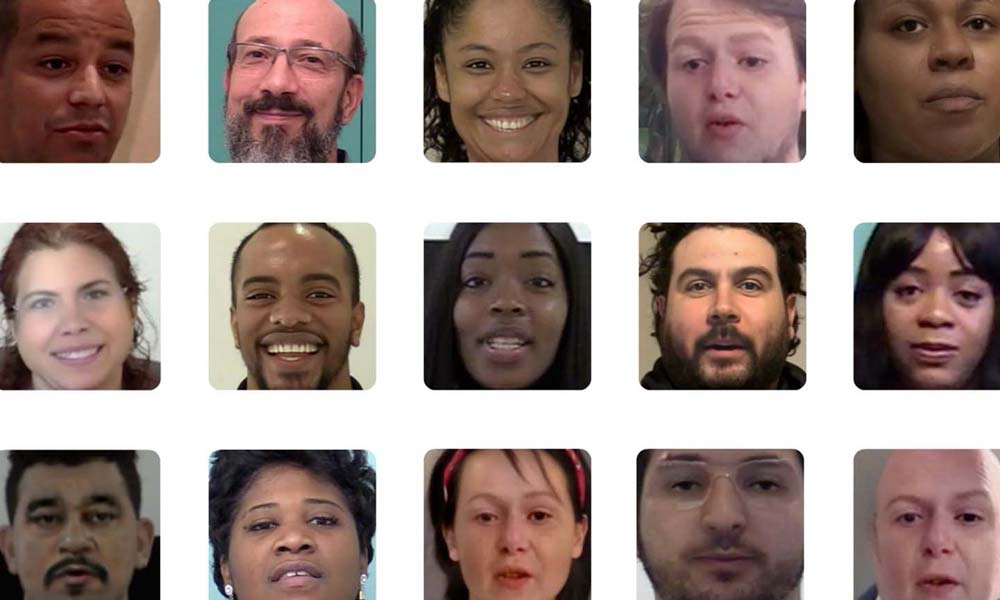

In the past, Facebook launched an anti-deepfake research initiative and, among other things, published a comprehensive dataset that researchers could use to test their detection methods.

At the beginning of January 2020, Facebook banned deepfakes on its own platforms, provided that these videos were explicitly created using artificial intelligence or machine learning and were not parody or satire. Facebook thus gave deepfakes a special status in its handling of fake content.

Deepfake models: like cameras

Facebook is now introducing a new detection method designed to identify deepfakes by the model that created them. Facebook researcher Tal Hassner compares the method to forensic procedures that trace an image back to its origin using the characteristics of the camera it was taken with.

Facebook researchers used this “reverse engineering” approach to detect deepfakes: they run a suspected deepfake image through a so-called Fingerprint Estimation Network (FEN), which is meant to detect the faintest traces left by the model that generated the image. The fingerprint estimation takes into account factors such as the fingerprint’s magnitude, its repeating structures, its frequency band, and its coherent frequency response.

The left half of the image shows fingerprints estimated from fake images, while the right half shows the associated frequency spectra. | Photo: Facebook AI
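To make “fingerprint” and “frequency spectrum” a little more concrete, here is a minimal sketch. It is not Facebook’s FEN, which is a trained neural network; it swaps in a classic hand-crafted stand-in: the noise residual left after subtracting a blurred copy of the image, followed by the magnitude of its 2D discrete Fourier transform. The function names and the synthetic 8×8 checkerboard “artifact” are hypothetical, chosen only to show how a repeating structure turns into a sharp peak in the spectrum.

```python
import cmath
import math

Image = list[list[float]]  # a grayscale image as rows of pixel values


def box_blur(img: Image) -> Image:
    """3x3 mean filter with wrap-around borders."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    total += img[(y + dy) % h][(x + dx) % w]
            out[y][x] = total / 9.0
    return out


def noise_residual(img: Image) -> Image:
    """Hypothetical hand-crafted fingerprint estimate (not the FEN): image minus its blurred copy."""
    blurred = box_blur(img)
    return [[img[y][x] - blurred[y][x] for x in range(len(img[0]))]
            for y in range(len(img))]


def magnitude_spectrum(f: Image) -> Image:
    """Magnitude of the naive 2D discrete Fourier transform of f."""
    h, w = len(f), len(f[0])
    spec = [[0.0] * w for _ in range(h)]
    for u in range(h):
        for v in range(w):
            acc = 0j
            for y in range(h):
                for x in range(w):
                    acc += f[y][x] * cmath.exp(-2j * math.pi * (u * y / h + v * x / w))
            spec[u][v] = abs(acc)
    return spec


def peak_frequency(spec: Image) -> tuple[int, int]:
    """Frequency bin (u, v) with the largest magnitude, ignoring the DC term (0, 0)."""
    bins = [(u, v) for u in range(len(spec)) for v in range(len(spec[0])) if (u, v) != (0, 0)]
    return max(bins, key=lambda uv: spec[uv[0]][uv[1]])


# Hypothetical 8x8 "generated" image: flat gray plus a faint checkerboard artifact.
fake = [[100.0 + 2.0 * (-1) ** (x + y) for x in range(8)] for y in range(8)]
spectrum = magnitude_spectrum(noise_residual(fake))
print(peak_frequency(spectrum))  # → (4, 4)
```

The checkerboard, a pattern of the kind upsampling layers are known to leave behind, concentrates all of its energy in the highest-frequency bin, while the flat background is removed by the residual step. Real fingerprints are far subtler, and realistic image sizes would call for an FFT library rather than this quadratic-time DFT loop.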

AI learns deepfake patterns on its own

The researchers trained their detection algorithm without supervision on a self-compiled dataset of fingerprints from currently known deepfake models. After training, the algorithm was also able to identify generative models that were not part of the training dataset.

“If this is a new AI model that no one has seen before, there would have been very little we could say about it in the past. Now we can say: ‘Look, the image uploaded here and the image uploaded there all come from the same model,’” Hassner told The Verge.
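Hassner’s “same model” example can be illustrated with an equally simplified sketch. It assumes each uploaded image has already been reduced to a fingerprint vector (how that vector is obtained is left open here) and groups the vectors by cosine similarity using a greedy threshold rule. This is a swapped-in technique, not the unsupervised method from Facebook’s research; the threshold of 0.9, the function names, and the example vectors are all hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two fingerprint vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def group_by_fingerprint(fingerprints: list[list[float]], threshold: float = 0.9) -> list[list[int]]:
    """Hypothetical greedy grouping: put each fingerprint into the first group whose
    first member is at least `threshold` similar, otherwise open a new group.
    Each group is read as "probably produced by the same (possibly unknown) model"."""
    groups: list[list[int]] = []
    for i, fp in enumerate(fingerprints):
        for group in groups:
            if cosine_similarity(fp, fingerprints[group[0]]) >= threshold:
                group.append(i)
                break
        else:
            groups.append([i])
    return groups


# Hypothetical fingerprint vectors for three uploaded images.
uploads = [
    [1.0, -1.0, 1.0, -1.0],   # image uploaded "here"
    [0.9, -1.1, 1.0, -0.95],  # image uploaded "there", similar fingerprint
    [1.0, 1.0, -1.0, -1.0],   # image from a different model
]
print(group_by_fingerprint(uploads))  # → [[0, 1], [2]]
```

A real system would have to choose the threshold from data, and greedy grouping depends on the order in which uploads arrive. The sketch only shows the basic idea that images sharing a fingerprint can be linked even when the model that made them has never been seen before.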

Facebook researchers describe their detection rate as much better than chance and on par with other current detection methods. The method is also said to be applicable to any kind of deepfake, such as text replacement, which means it generalizes better than earlier detection methods built around faces.

Facebook AI Research is publishing its code and trained AI model on GitHub. In the course of the research, Facebook also created a catalog that currently contains 100 different deepfake models.

According to Hassner, it is still open whether and when the deepfake detection algorithm will be used on Facebook’s platforms.

Deepfake detection remains a cat-and-mouse game

However, the particular challenge with deepfake technology is that generative AI models are far more diverse than Hassner’s camera analogy suggests, and that methods from different fakes can be blended.

In addition, the range of available generative models keeps growing, and the technology is developing rapidly. Facebook also tested its algorithm only on its own datasets, so it is impossible to predict what deepfake detection rate it can consistently achieve outside lab conditions.

It is therefore unclear whether large social platforms such as Facebook or YouTube can win the cat-and-mouse game of deepfake detection. Artificial intelligence researcher Hao Li expects that sooner or later deepfakes will reach visual perfection, no longer contain any recognizable traces, and simply be indistinguishable from the original, even for the best algorithm.

Sources: Facebook AI, CNBC; cover photo: Facebook AI


Deepfakes: Facebook introduces new tracking technology. Last update: June 17, 2021

